Structured Role Intelligence System: A Case Study in Decision Infrastructure

Using AI systems to interpret signal in a high-noise environment

Modern Job Search Context

Modern job search environments produce an overwhelming volume of signals.

Hundreds of roles appear across platforms

Titles vary widely

Responsibilities are inconsistently defined

Organizational structures are rarely visible from the outside

Posting dates are often missing, making it difficult to determine whether opportunities are current or inactive

Some roles remain visible long after they have been filled

At first glance, this appears to be an information deficit. It is not.

The challenge is not access to information. The challenge is interpreting signal within noise.

Silence — the absence of visible response — is often the most destabilizing signal.

Without structure, silence becomes difficult to interpret, creating uncertainty that feeds repeated checking, hesitation, and second-guessing. A structured method for recording and revisiting the possible causes of silence stabilizes its meaning across time. Although silence appears to mean nothing, it becomes useful when structure allows for disciplined interpretation.

Activity without structure

Earlier job searches relied heavily on intuition and memory — scanning listings, making quick judgments, tracking opportunities loosely, and revisiting decisions later.

This approach felt active, but over time revealed structural weaknesses:

Similar roles were evaluated differently across time.

Follow-up timing became inconsistent.

Strong opportunities were occasionally lost in the flow of new inputs.

Weak opportunities consumed attention that could have been directed elsewhere.

These conditions created cognitive friction — not because the work itself was conceptually difficult, but because the environment lacked structure.

That friction produced emotional instability: overwhelm, frustration, and confusion. These reactions encouraged repeated activity without meaningful progress — a pattern that felt like sustained effort but often functioned as productive avoidance.

In hindsight, that pattern avoided confronting the deeper structural problem: the absence of an intentional system.

Motion without progress

The modern job search contains many moving parts:

Finding opportunities

Evaluating roles

Tailoring applications

Tracking follow-up timelines

Monitoring silence and responses

Engaging with organizations beyond posted roles

Building portfolio artifacts

Developing public-facing professional identity

Each task made sense individually. Together, they felt disconnected. Without sequencing, it was difficult to determine where to begin, and the natural tendency became a loop: Find → Apply → Repeat.

This created motion — but not progress.

Reframing Job Search As An Operational System

The realization came gradually. It required discipline to step back and recognize that these activities were not separate tasks but components of a larger operational system.

The job search itself was behaving like an operational system under load:

New inputs arrived continuously

Decisions were interdependent

Timing mattered

Sequencing mattered

Memory mattered

Small inconsistencies compounded into larger inefficiencies. The problem was not effort. The problem was decision instability. What was needed was not a checklist. Not a tool. Not a faster workflow.

The first objective was stability — not speed.

Using AI to navigate uncertainty

What was needed was decision infrastructure — a structured method for detecting, qualifying, acting on, and monitoring opportunities in a consistent way.

Artificial intelligence did not create this need. It provided the scaffolding that made the solution possible.

Used without structure, AI increases noise. Used with structure, AI stabilizes reasoning.

The system that emerged was not designed to eliminate uncertainty. It was designed to operate reliably within it. That realization marked the transition from reactive activity to intentional system design.

From fragmented tasks to structured reasoning

The Role Intelligence System was not developed as a collection of isolated prompts. It emerged as a structured response to instability within the high-noise decision environment described above.

Rather than treating discovery, evaluation, application, and follow-up as separate tasks, the system was designed as a coordinated reasoning structure. This system established sequencing, constraint boundaries, and persistent memory. It transformed fragmented activity into structured decision flow, moving from signal detection to structured evaluation, from evaluation to targeted action, and from action to long-term learning.

Individual tasks became coordinated system behavior. Structured flow allowed decisions to become repeatable, comparable, and cumulative rather than episodic.

Artificial intelligence did not replace judgment within this system. It provided scaffolding that stabilized reasoning across time. The result was not a faster workflow. The result was a decision system capable of operating under uncertainty without losing consistency.

Architecture of the Role Intelligence System

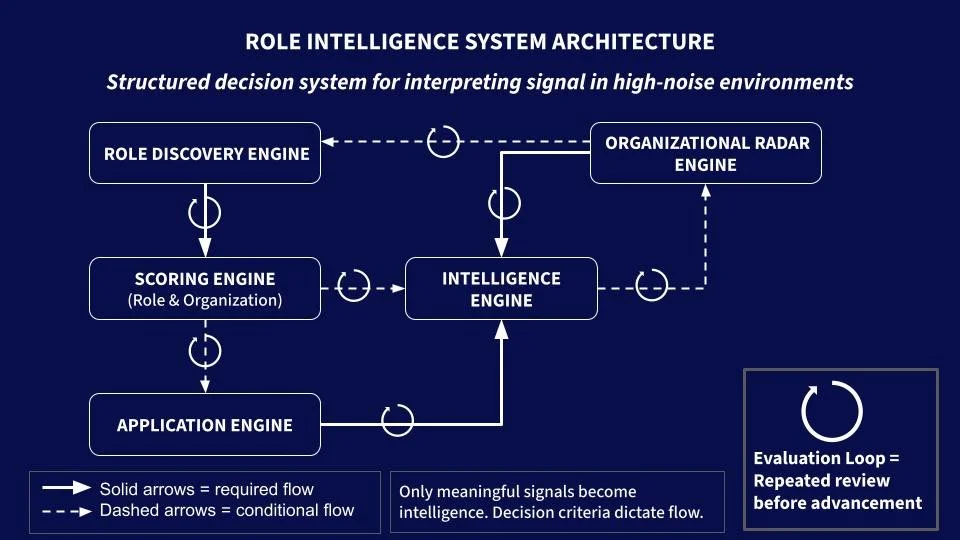

The reasoning system operates with five interconnected engines:

Role Discovery Engine

Role & Organization Scoring Engine

Application Engine

Organizational Radar Engine

Intelligence Engine

Each engine performs a distinct function within the broader decision process, transforming incoming signals into structured action and recorded outcomes.

The five engines form a closed-loop system with both required and conditional paths.

Selective Intelligence Generation Through Structured Evaluation

Roles and organizations move through evaluation cycles. Advancement between engines occurs only after evaluation cycles confirm alignment. Not all discovered roles advance to scoring. Many exit during early evaluation cycles before formal classification occurs.

Intelligence emerges from interactions between role signal and organizational signal over time.

In the architecture diagram, solid arrows represent required system flows and dotted arrows represent conditional advancement based on signal strength and structural alignment.

Only meaningful signals are promoted into the Intelligence Engine, which acts as operational system memory. The Organizational Radar Engine operates in parallel with the Role Discovery Engine, preserving high-signal organizations and guiding future discovery focus.

Together, these five engines form a closed-loop intelligence system capable of transforming fragmented activity into structured decision continuity.
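The flow described above can be sketched as a small transition function. This is a minimal illustration under stated assumptions, not the author's implementation (the engines were prompt-based): the `Stage` enum and `next_stages` function are hypothetical, the two boolean inputs stand in for the outcome of human evaluation cycles, and the split between required and conditional paths is read from the text rather than from the diagram itself.

```python
from enum import Enum, auto


class Stage(Enum):
    """Hypothetical labels for the five engines plus an exit state."""
    DISCOVERY = auto()
    SCORING = auto()
    APPLICATION = auto()
    RADAR = auto()
    INTELLIGENCE = auto()
    EXIT = auto()


def next_stages(stage: Stage, role_fit: bool, org_fit: bool) -> set[Stage]:
    """Return the stages a signal may move to from `stage`.

    The two booleans stand in for the outcome of human evaluation
    cycles; they are not computed here.
    """
    if stage is Stage.DISCOVERY:
        # First gate: only structurally viable roles advance to scoring.
        return {Stage.SCORING} if role_fit else {Stage.EXIT}
    if stage is Stage.SCORING:
        targets = {Stage.INTELLIGENCE}  # required: scored roles are recorded
        if role_fit and org_fit:
            targets.add(Stage.APPLICATION)  # conditional: second gate
        elif org_fit:
            targets.add(Stage.RADAR)  # conditional: strong org, weak role
        return targets
    if stage is Stage.APPLICATION:
        return {Stage.INTELLIGENCE}  # required: outcomes, including silence
    if stage is Stage.INTELLIGENCE:
        # Conditional: periodic review may promote the organization.
        return {Stage.RADAR} if org_fit else set()
    if stage is Stage.RADAR:
        return {Stage.DISCOVERY}  # radar guides future discovery focus
    return set()  # EXIT is terminal
```

Returning a set rather than a single stage mirrors the dotted arrows: one scoring pass can both record a role in memory and promote its organization into Radar.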

The engines that power the system

While the Role Intelligence System defines the possible sequences of reasoning, each engine performs a distinct operational function in transforming ambiguous signals into structured decisions and recorded outcomes.

These engines were implemented through prompt-based interfaces, but their real value lies in their decision role — not their textual form.

Role Discovery Engine: Identifying roles under constraint

Primary Function:

Detect structurally viable roles within high-noise environments.

Operational Role:

Translate broad opportunity environments into a focused candidate set aligned with defined structural conditions.

Key Constraints:

Remote compatibility requirements

Organizational maturity indicators

Cross-functional positioning signals

Evidence of operational complexity

Role proximity to decision-making structures

Output Type:

Shortlist of viable roles

Initial organizational metadata

Candidate role context

Decision Contribution:

Reduces discovery noise and establishes the initial signal pool evaluated for progression to structured scoring.

Discovery outputs entered evaluation loops before advancing to scoring, and many candidates exited the system at this stage. This evaluation boundary functioned as the system’s first decision gate: it determined which discovered roles advanced into formal scoring and which were intentionally allowed to exit without further analysis. The gate preserved analytical capacity by keeping exploratory noise out of structured classification.

This engine converts many possible roles into fewer interpretable candidates, allowing for focused attention and effort.
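A minimal sketch of this first decision gate, assuming each discovered role has already been annotated (by a person or a prompt) with yes/no judgments for the five key constraints listed above. The `DiscoveredRole` fields, the choice to treat remote compatibility as a hard filter, the `min_signals=3` threshold, and the sample roles are all hypothetical; the source does not say how constraints were combined.

```python
from dataclasses import dataclass


@dataclass
class DiscoveredRole:
    """Hypothetical yes/no annotations for one discovered role."""
    title: str
    remote_compatible: bool
    mature_organization: bool
    cross_functional: bool
    operational_complexity: bool
    near_decision_makers: bool


def passes_discovery_gate(role: DiscoveredRole, min_signals: int = 3) -> bool:
    """Treat remote compatibility as a hard requirement, then require at
    least `min_signals` of the four remaining structural signals."""
    if not role.remote_compatible:
        return False
    signals = (
        role.mature_organization,
        role.cross_functional,
        role.operational_complexity,
        role.near_decision_makers,
    )
    return sum(signals) >= min_signals


def shortlist(roles: list[DiscoveredRole], min_signals: int = 3) -> list[DiscoveredRole]:
    """Keep roles that clear the gate; the rest exit without a tracker record."""
    return [r for r in roles if passes_discovery_gate(r, min_signals)]


# Invented sample roles for illustration only.
candidates = [
    DiscoveredRole("Operations Lead", True, True, True, True, False),
    DiscoveredRole("Ops Analyst", True, False, False, True, False),
    DiscoveredRole("Onsite Ops Manager", False, True, True, True, True),
]
print([r.title for r in shortlist(candidates)])  # ['Operations Lead']
```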

Role & Organization Scoring Engine: Qualifying and prioritizing roles

Primary Function:

Determine structural alignment between opportunity conditions and operational capability for roles and organizations that pass the discovery evaluation loop.

Operational Role:

Transform evaluated candidates into structured scoring outputs that enable comparison across time.

Key Constraints:

Role archetype classification

Organizational alignment indicators

Decision proximity signals

System ownership expectations

Functional exposure breadth

Operational complexity stage

Output Type:

Role classification

Fit prioritization tier

Structured evaluation record

Decision rationale documentation

Decision Contribution:

Creates comparability across roles and prevents inconsistent judgment across time.

Scoring occurred only after candidates passed initial evaluation loops confirming structural viability. A second evaluation boundary operated at the scoring-to-application transition: only roles demonstrating sufficient structural alignment and strategic priority advanced to execution. Many scored roles remained recorded but inactive, preserving signal without triggering premature action. This engine converts candidate roles into prioritized decisions.
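The scoring step can be sketched as a weighted sum over the six key constraints, mapped to a fit tier and stored with a short rationale. The `WEIGHTS` values, the 0 to 2 rating scale, the 75% and 50% tier cut-offs, and the sample ratings are illustrative assumptions; the actual scoring framework lived in the codebook and is not reproduced in the source.

```python
from dataclasses import dataclass, field

# Hypothetical weights for the six key constraints (not taken from the source).
WEIGHTS = {
    "archetype_match": 3,
    "org_alignment": 3,
    "decision_proximity": 2,
    "system_ownership": 2,
    "functional_breadth": 1,
    "complexity_stage": 1,
}
MAX_SCORE = 2 * sum(WEIGHTS.values())  # every constraint rated 2 -> 24


@dataclass
class ScoreRecord:
    """Structured evaluation record: score, tier, and rationale."""
    role: str
    score: int
    tier: str
    rationale: list[str] = field(default_factory=list)


def score_role(role: str, ratings: dict[str, int]) -> ScoreRecord:
    """Rate each constraint 0 (absent), 1 (partial), or 2 (strong).

    Missing ratings count as 0, so unassessed constraints never inflate a score.
    """
    score = 0
    rationale = []
    for name, weight in WEIGHTS.items():
        rating = ratings.get(name, 0)
        if rating not in (0, 1, 2):
            raise ValueError(f"{name} must be rated 0, 1, or 2, got {rating}")
        score += weight * rating
        if rating == 2:
            rationale.append(f"strong {name}")
    if score >= 0.75 * MAX_SCORE:
        tier = "high"
    elif score >= 0.5 * MAX_SCORE:
        tier = "medium"
    else:
        tier = "low"
    return ScoreRecord(role, score, tier, rationale)


record = score_role("Operations Lead", {
    "archetype_match": 2, "org_alignment": 2, "decision_proximity": 1,
    "system_ownership": 2, "functional_breadth": 1, "complexity_stage": 1,
})
print(record.score, record.tier)  # 20 high
```

In this sketch, only `high` records would be candidates for the Application Engine, while `medium` records stay recorded but inactive, which is one way to express the second evaluation boundary.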

Application Engine: Executing under structured alignment

Primary Function:

Translate structured decisions into targeted execution.

Operational Role:

Align application materials with verified structural insights generated during evaluation.

Key Constraints:

Organizational problem diagnosis

Candidate–organization alignment

Narrative positioning logic

Material customization thresholds

Output Type:

Tailored application materials

Role-specific narrative framing

Execution-ready communication artifacts

Decision Contribution:

Ensures that execution reflects structured reasoning rather than reactive effort.

Before submission, application materials passed through final evaluation cycles to confirm narrative alignment and structural accuracy. Following submission, execution events transitioned into the Intelligence Engine, where outcomes were recorded as measurable signals within persistent system memory.

This engine converts approved decisions into targeted action.

Organizational Radar Engine: Predicting future opportunities

Primary Function:

Identify and track organizations likely to generate future opportunities.

Operational Role:

Extend discovery beyond posted roles by detecting organizations demonstrating strong structural signals.

Radar operates as a parallel discovery system, generating ongoing organizational intelligence that may not yet be visible through role postings. Organizations entered Radar only after evaluation cycles confirmed persistent structural alignment. Signals recorded within the Intelligence Engine were reviewed periodically to determine whether organizations warranted promotion into long-term tracking. This boundary preserved Radar clarity by ensuring that only validated organizational signals accumulated over time.

Organizations may enter Radar through two pathways:

Direct detection: External discovery of promising organizations that meet defined criteria

Promotion events: Elevation of organizations following meaningful interaction signals, such as thoughtful application design, aligned content signals, or disciplined communication patterns

Key Signals Observed:

Evidence of structured organizational thinking

Thoughtful hiring design

Clear operational reasoning

Alignment between role framing and organizational behavior

Output Type:

Organization watchlists

Promotion records

Structural signal observations

Organizational change tracking

Decision Contribution:

Preserves high-signal organizations independently of individual role outcomes and supports proactive future opportunity discovery.

This engine converts emerging organizational signals into future opportunity awareness.

Intelligence Engine: Building operational memory

The system required persistent memory to maintain stable interactions across engines and across time. Without recorded structure, each decision would require fresh reasoning. With recorded structure, decisions could accumulate, enabling pattern recognition and continuous refinement.

The Intelligence Engine functions as qualified memory — recording only validated signals that meet defined structural thresholds.

Primary Function:

Capture structured records of role-specific and organization-specific evaluations, application outcomes, and interactions. These records form the persistent memory layer of the system.

Operational Role:

Record application-level and emerging organization-level signals as measurable events, enabling interpretation of silence, rejection, response patterns, and organizational changes.

Key Signals Recorded:

Estimated response windows

Follow-up timing intervals

Response latency duration

Estimated silence-as-rejection time windows

Outcome classification events

Outreach events and outcomes

Organizational status, change, and growth

Output Type:

Role response timing records

Follow-up triggers

Outcome classification data

Historical role-level and organization-level logs

Decision Contribution:

Creates structured memory that enables interpretation of delayed feedback and supports pattern recognition across applications and organizations.

This engine converts silence and outcomes into structured operational memory.

Operational memory systems: Supporting decision continuity using persistent structures

The Intelligence Engine was implemented through two primary structures:

Role-level tracking framework

Organization-level tracking framework

Together, these structures transformed short-term activity into long-term learning.

(1) Role Tracker: Accumulating role-level decision memory

The Role Tracker served as the primary operational interface — the location where structured role-level decisions were recorded and monitored.

Entries were created only after evaluation thresholds were met, ensuring that memory contained validated signals rather than exploratory noise.

Rather than functioning as a simple task log, the tracker recorded decision structure.

Key recorded elements included:

Role identification and organizational context

Role archetype classification

Reporting structure and system ownership signals

Decision proximity indicators

Functional exposure characteristics

Operational complexity stage

Application timing and response intervals

Follow-up thresholds

Outcome classification signals

These elements created a structured historical record of decision behavior.
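One way to express a Role Tracker row is as a typed record that refuses entries which have not cleared evaluation. This is a sketch under assumptions: the field names paraphrase the recorded elements above, the `ENTRY_TIERS` gate and the 10-day and 21-day defaults are hypothetical, and the real tracker may well have been a spreadsheet rather than code.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

ENTRY_TIERS = {"high", "medium"}  # hypothetical tiers allowed into memory


@dataclass
class RoleTrackerEntry:
    """One role-level decision record; field names are illustrative."""
    role: str
    organization: str
    archetype: str
    reporting_line: str
    decision_proximity: str
    functional_exposure: str
    complexity_stage: str
    fit_tier: str
    applied_on: Optional[date] = None
    follow_up_after_days: int = 10   # follow-up threshold (assumed)
    response_window_days: int = 21   # expected response window (assumed)
    outcome: str = "open"            # e.g. open, interview, rejected

    def __post_init__(self) -> None:
        # Memory holds validated signals only, so unqualified roles are refused.
        if self.fit_tier not in ENTRY_TIERS:
            raise ValueError(f"tier {self.fit_tier!r} is below the entry threshold")
        if self.follow_up_after_days > self.response_window_days:
            raise ValueError("follow-up threshold must fall inside the response window")
```

Placing the threshold check in `__post_init__` keeps the entry rule next to the record definition, so no code path can create an unqualified row.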

Over time, the Role Tracker became an instrumentation surface — a system that captured signals rather than merely tracking actions. Silence became measurable. Responses became comparable. Outcomes became interpretable across time.

This structure enabled the detection of patterns such as:

Response timing variability across organizations

Structural signals associated with early rejection

Conditions associated with extended review cycles

Alignment signals preceding successful engagement

The Role Tracker converted individual applications into accumulated decision intelligence.
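Detecting a pattern such as response timing variability can start with grouping recorded latencies by organization. The sketch below assumes a hypothetical export of `(organization, days_to_response)` pairs from the tracker, with `None` meaning no response yet; the `latency_by_org` name and the two-response minimum are illustrative choices.

```python
from collections import defaultdict
from statistics import median
from typing import Optional


def latency_by_org(
    events: list[tuple[str, Optional[int]]], min_responses: int = 2
) -> dict[str, dict[str, float]]:
    """Summarize response latency (in days) per organization.

    Events with no response (None) are counted as still silent and
    excluded from the latency statistics.
    """
    latencies: dict[str, list[int]] = defaultdict(list)
    silent: dict[str, int] = defaultdict(int)
    for org, days in events:
        if days is None:
            silent[org] += 1
        else:
            latencies[org].append(days)
    summary = {}
    for org, values in latencies.items():
        if len(values) < min_responses:
            continue  # too few responses to call it a pattern
        summary[org] = {
            "median_days": median(values),
            "spread_days": max(values) - min(values),
            "still_silent": silent[org],
        }
    return summary
```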

(2) Organization Tracker: Accumulating organization-level memory

While the Role Tracker captured role-specific activity, the Organization Tracker, which served as the memory layer of the Organizational Radar Engine, tracked organization-level signals across longer time horizons.

Radar organizations entered through two primary pathways:

Direct Discovery

Organizations identified through targeted exploration were recorded for ongoing observation, often before suitable roles were posted. These entries reflected structural alignment rather than immediate opportunity.

Promotion Events

Organizations encountered through role-level evaluation were recorded only after meaningful interaction signals met evaluation thresholds, ensuring that memory contained validated signals rather than exploratory noise.

These signals included:

Thoughtful application design

Clear operational reasoning within job descriptions

Structured evaluation behavior

Evidence of disciplined internal thinking

Promotion allowed strong organizational signals to be preserved independently of individual role outcomes.

This ensured that high-quality organizations remained visible even when specific roles were not yet available.

Radar entries recorded structured indicators such as:

Industry and operational domain

Organizational stage and structural pressure

Observed hiring behavior patterns

Structural readiness indicators

Review cadence recommendations

Risk flags and signal confidence levels

These elements supported long-term awareness of evolving opportunity landscapes.

The Organization Tracker transformed organizations from isolated encounters into persistent strategic signals.

(3) External Codebook: Framework for standardizing and interpreting records

In addition to the operational trackers, the system relied on an external codebook to standardize interpretation logic.

The codebook defined:

Field definitions and classification criteria

Scoring frameworks

Decision threshold logic

Outcome interpretation rules

Maintaining the codebook as a separate reference allowed classification logic to evolve independently of operational data collection while preserving interpretive consistency. Over time, the codebook became a reference layer that kept decisions comparable even as the system expanded.
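Keeping interpretation rules outside the records can be sketched as a versioned lookup table that classification code reads at run time. The `CODEBOOKS` contents, version labels, thresholds, and sample records are hypothetical. Because records store raw scores rather than conclusions, the same entries can be re-read under an older or newer codebook version, which also illustrates why preserving earlier versions is useful.

```python
from types import MappingProxyType

# Hypothetical codebook versions: same fields, refined thresholds over time.
# MappingProxyType makes each published version read-only.
CODEBOOKS = {
    "v1": MappingProxyType({"high_min": 20, "medium_min": 12}),
    "v2": MappingProxyType({"high_min": 18, "medium_min": 12}),
}


def classify(score: int, version: str) -> str:
    """Interpret a stored score using the rules of one codebook version."""
    rules = CODEBOOKS[version]
    if score >= rules["high_min"]:
        return "high"
    if score >= rules["medium_min"]:
        return "medium"
    return "low"


# Records store raw observations, not conclusions.
records = [{"role": "Operations Lead", "score": 19}, {"role": "Ops Analyst", "score": 9}]

for version in CODEBOOKS:
    print(version, [classify(r["score"], version) for r in records])
# v1 ['medium', 'low']
# v2 ['high', 'low']
```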

(4) System evolution records: Versioning for historical learning evidence

Earlier versions of both prompts and tracker structures were preserved rather than discarded. These historical records served as evidence of system iteration and refinement.

Schema revisions reflected emerging insights, including:

Expanded classification fields

Refined evaluation thresholds

Improved outcome interpretation logic

Enhanced organization-level tracking structures

Maintaining prior versions allowed design decisions to remain visible, reinforcing transparency in how the system matured over time. This preserved the developmental history of the system — not just its final state.

System Benefits of Stabilizing Memory Infrastructure

These memory structures transformed the system from a sequence of actions into a durable decision environment. Instead of reacting to each opportunity independently, the system accumulated structured understanding across time.

This accumulation created stability:

Decisions became comparable.

Signals became interpretable.

Learning became persistent.

Artificial intelligence supported these structures by enabling consistent interaction with defined logic — but the system itself depended on memory. Memory created continuity. Continuity created learning. Learning created confidence in decision direction.

Operational Rhythm: How Engines Were Used in Practice

Although the system architecture defined possible flows, the system did not operate automatically. Each transition between engines required deliberate review and interpretation.

A typical cycle began with structured discovery. The Role Discovery Engine generated candidate roles under defined constraints. These outputs were not treated as decisions. They became material for evaluation.

During these early review sessions, role descriptions were examined for structural signals, alignment indicators, and hidden risks. Some roles were rejected immediately; others advanced to deeper analysis. Many candidates exited the system at this stage, never advancing to formal scoring.

Strong candidates that survived early evaluation cycles were processed through structured scoring logic. These outputs were reviewed again before advancing to execution. Decisions were rarely made in a single pass. Instead, multiple cycles of review clarified alignment before action occurred.

Applications were generated only after evaluation confirmed structural fit. Draft materials were reviewed and refined iteratively before submission. This ensured that execution reflected verified reasoning rather than reactive effort.

Parallel to role-level work, organizations demonstrating strong structural signals were promoted into Radar tracking. These organizations were revisited across time, allowing emerging signals to influence future discovery cycles.

This evaluation rhythm — discovery, scoring, refinement, execution, and reflection — repeated across many cycles. Over time, repeated interaction between engines stabilized judgment and strengthened signal interpretation.

The system did not function as an automated workflow. It functioned as a structured reasoning environment in which human judgment guided movement between stages.

Dynamic Interactions Across Engines: How Signals Move Through the System

While the system defined the sequence of reasoning and the engines established distinct operational functions, the system derived its stability from how those engines interacted across time.

The interaction logic governing signal movement determined when observations became memory, when decisions triggered action, and when organizations transitioned from isolated encounters into persistent intelligence.

Rather than functioning as a linear workflow, the system operated as a conditional decision environment. Signals advanced, branched, or accumulated based on structured thresholds.

System entry — discovery under constraint

Signal identification without memory creation

Roles entered the system through structured discovery processes designed to filter high-noise environments into manageable candidate sets. Discovery alone did not create memory. Instead, discovery functioned as a signal intake mechanism, identifying potential opportunities while preserving flexibility to discard low-signal observations without accumulating noise.

Discovery outputs entered structured evaluation loops, where signal validity was assessed before any advancement to scoring. This separation ensured that early exploration remained flexible while long-term memory remained disciplined. Discovery converted many potential signals into candidates for structured evaluation, only a subset of which advanced to scoring.

Qualification — evaluation and scoring as decision gateway

Threshold logic and memory creation

Evaluation functioned as the primary decision gateway within the system. Formal scoring occurred only after repeated evaluation cycles confirmed structural alignment. At this stage, each opportunity was examined under defined structural criteria, including organizational alignment, decision proximity, and operational complexity indicators.

Only opportunities meeting defined structural alignment thresholds generated persistent records within the Role Tracker. This qualification step ensured that memory contained validated signals rather than exploratory noise. Evaluation determined whether candidates deserved formal scoring, while scoring converted qualified candidates into structured decision records.

This moment — the transition from evaluation to recorded memory — marked the point at which observation became structured operational knowledge.

Execution — conditional advancement to application

Action triggered by verified alignment

Opportunities demonstrating both organizational-level and role-level alignment advanced to structured execution. Execution occurred only after scoring and repeated evaluation confirmed that conditions justified targeted action.

Application materials were generated using structured reasoning outputs, ensuring that communication reflected verified understanding rather than reactive effort. Following execution, application-level outcomes — including silence — were recorded within the Role Tracker as measurable system events. Execution converted validated decisions into recorded action outcomes.

Promotion to radar — organizational signal preservation

Separating organizational fit from role fit

Not all strong organizations produced suitable roles at the time of discovery. When evaluation identified strong organizational alignment but insufficient role-level fit, organizations were promoted into the Organization Tracker. Promotion allowed high-signal organizations to remain visible independent of specific role outcomes.

This mechanism preserved structural opportunity signals that might otherwise have been lost. Promotion converted validated organizational signals into persistent organizational intelligence. This distinction between organizational fit and role fit prevented premature dismissal of strategically valuable environments.

Continuity — organizational radar feedback into discovery

Parallel intelligence supporting future detection

The Organization Tracker operated as a parallel intelligence surface, generating ongoing awareness of structurally aligned organizations. Radar entries produced signals that informed future discovery cycles, enabling the system to prioritize environments demonstrating sustained alignment.

Organizations remained active within the Organization Tracker until signals indicated misalignment, declining signal credibility, or closure of anticipated opportunity windows. When necessary, organizations were archived to preserve signal clarity and maintain strategic focus. Archived organizations remained visible within system memory but were no longer included in active monitoring cycles. This preserved historical signal context without introducing active noise. Radar converted emerging organizational signals into future discovery guidance.
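The promotion and archiving rules above can be sketched as two small functions over an Organization Tracker row. The `RadarEntry` fields, the boolean review inputs, and the 0.3 confidence floor are hypothetical stand-ins for the evaluation the text describes; archiving changes a status flag instead of deleting the row, matching the point that archived organizations stay visible in memory.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class RadarEntry:
    """Hypothetical Organization Tracker row."""
    organization: str
    status: str = "active"          # active or archived
    confidence: float = 0.5         # signal confidence, 0 to 1
    history: list[str] = field(default_factory=list)


def should_promote(org_aligned: bool, role_fit: bool) -> bool:
    """Promote when the organization fits but the current role does not."""
    return org_aligned and not role_fit


def review(entry: RadarEntry, still_aligned: bool, window_open: bool,
           confidence: float, on: date, min_confidence: float = 0.3) -> RadarEntry:
    """Periodic review: archive on misalignment, low confidence, or a closed
    opportunity window. Archived rows keep their history rather than being deleted."""
    entry.confidence = confidence
    if entry.status == "active" and (
        not still_aligned or not window_open or confidence < min_confidence
    ):
        entry.status = "archived"
        entry.history.append(f"{on.isoformat()}: archived")
    else:
        entry.history.append(f"{on.isoformat()}: reviewed ({entry.status})")
    return entry
```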

Stability — monitoring as decision continuity

Memory as the foundation of learning

The Intelligence Engine preserved structured records of validated events. Each recorded observation contributed to cumulative understanding across time. Silence, once destabilizing, became measurable. Responses became comparable. Outcomes became interpretable across time. This accumulation transformed delayed feedback into usable intelligence.

Patterns began to emerge:

Response timing variability across organizations

Conditions associated with early rejection

Signals preceding extended evaluation cycles

Alignment characteristics associated with meaningful engagement

Through repeated interaction cycles, the system transitioned from reactive effort to sustained learning.

Role Intelligence System Outcomes

Changes after introducing structure

The introduction of structured reasoning and persistent decision memory produced changes that were both operational and cognitive.

Decreased cognitive load

Constrained discovery noise

Improved evaluation consistency

Converted silence into interpretable signal

Strengthened decision prioritization

Enabled persistent tracking of organizational signals

Increased confidence through system design

Reduced productive avoidance

Importantly, these outcomes emerged not from isolated actions but from repeated evaluation across structured system cycles. Some effects were immediate. Others emerged gradually as repeated use stabilized behavior across time. These outcomes were not the result of increased effort. They were the result of reduced variability in decision-making. Structure did not make the process faster. It made the process steadier.

Outcome 1 — Decreased cognitive load

Stability enabled system building

The earliest observable change was a reduction in mental friction.

Before structure:

Decision fatigue accumulated quickly

Uncertainty required repeated mental simulation

Loose tracking required constant rechecking

Ambiguous silence created ongoing tension

Introducing structure created psychological stability before operational clarity.

Information remained visible

Timing remained predictable

Progress remained observable

This stability created the conditions necessary to build the system itself. Faith did not emerge from outcomes. It emerged from continuity. Confidence formed gradually through repeated interaction with structured decisions — long before external results appeared. Energy shifted from remembering toward reasoning. That shift made system-building possible.

Outcome 2 — Reduced discovery noise

Structured intake replaced reactive searching

Once cognitive stability was established, discovery behavior began to stabilize.

Role searches became scheduled rather than reactive.

Signal thresholds became consistent rather than situational.

Daily discovery sessions created a predictable rhythm.

Each search session ended with a clear decision boundary: Either a viable opportunity emerged, or no action was required. Many days produced no qualifying roles. That absence was not failure. It became confirmation that signal thresholds were functioning correctly. Discovery shifted from reactive searching to disciplined intake.

Outcome 3 — Improved scoring consistency

Comparability replaced memory dependence

Without structured evaluation logic, similar opportunities were often judged differently depending on timing, context, or cognitive load. Role descriptions are inherently ambiguous. Organizational signals are rarely complete. Memory alone is not sufficient to maintain comparability across time. Structured scoring criteria created a stable comparison framework. Roles were no longer judged against vague impressions. They were evaluated against defined structural signals. Evaluation became less dependent on mood or urgency and more dependent on defined conditions. Consistency replaced variability.

Outcome 4 — Silence became interpretable

Uncertainty became measurable

Silence is one of the most destabilizing signals in unstructured environments. When no response occurs, it is difficult to distinguish among delay, rejection, and inactivity.

Recording response timing transformed silence into measurable data:

Each application established an expected response window

Follow-up thresholds created structured decision points

Soft rejection timelines prevented indefinite uncertainty

Over time, silence lost its ambiguity. It became a measurable interval rather than an emotional unknown.
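Turning silence into a measurable interval amounts to comparing elapsed days against the thresholds listed above. This sketch assumes hypothetical defaults (follow up after 10 days, expect a response within 21, treat 45 days of silence as a soft rejection); the `silence_status` name is illustrative, and the source does not state its actual windows, which were estimated per application.

```python
from datetime import date


def silence_status(applied_on: date, today: date, responded: bool = False,
                   follow_up_after: int = 10, response_window: int = 21,
                   soft_rejection_after: int = 45) -> str:
    """Map days of silence since an application to a decision point."""
    if responded:
        return "responded"
    if not follow_up_after <= response_window <= soft_rejection_after:
        raise ValueError("thresholds must be ordered")
    elapsed = (today - applied_on).days
    if elapsed < 0:
        raise ValueError("today is before the application date")
    if elapsed < follow_up_after:
        return "waiting"          # silence is expected; no action needed
    if elapsed < response_window:
        return "follow_up_due"    # structured decision point
    if elapsed < soft_rejection_after:
        return "overdue"          # outside the expected response window
    return "soft_rejection"       # close the loop and stop checking
```

Because every application always maps to exactly one state, repeated checking is replaced by a single lookup against a known interval.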

Patterns began to emerge:

Some organizations rejected quickly when misalignment was clear

Others required extended evaluation cycles

Certain silence patterns correlated with eventual rejection

Others indicated delayed but meaningful engagement

Silence transitioned from uncertainty to signal. That transition significantly reduced emotional strain.

Outcome 5 — Stronger decision prioritization

Energy followed structure

Without persistent tracking, each new opportunity feels equally urgent. Weak signals compete with strong signals. Attention fragments across too many possibilities. Structured prioritization created visible differences between opportunities. Roles were assigned relative position, not just binary status.

This allowed:

High-fit roles to receive sustained attention

Medium-fit roles to remain visible without dominating effort

Low-fit roles to exit the system quickly

Prioritization became intentional rather than reactive. Energy followed structure instead of urgency.

Outcome 6 — Organizations became persistent signals

Opportunity landscapes expanded

In traditional job search workflows, organizations disappear once individual applications conclude. If no role exists at the right moment, the organization fades from view. The introduction of the Organizational Radar Engine changed this dynamic. Organizations demonstrating strong structural signals remained visible even when individual roles were unavailable.

This created:

Long-term awareness of aligned organizations

Early detection of future opportunities

Reduced dependence on chance timing

Organizations became persistent signals rather than temporary encounters.
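The Organizational Radar Engine is not specified in detail here, so the following is only a minimal sketch of its central idea: organizations are tracked independently of individual roles. `OrgRadar`, `OrgSignal`, the `alignment` score, and the threshold are hypothetical names and values introduced for illustration.

```python
from dataclasses import dataclass, field

@dataclass
class OrgSignal:
    name: str
    alignment: float  # hypothetical 0.0-1.0 score for structural signals
    open_roles: list = field(default_factory=list)

class OrgRadar:
    """Sketch: organizations persist whether or not they have open roles."""

    def __init__(self, threshold: float = 0.7):
        self.threshold = threshold
        self.orgs = {}  # organization name -> OrgSignal

    def observe(self, name: str, alignment: float) -> None:
        """Record or update an organization's alignment assessment."""
        org = self.orgs.setdefault(name, OrgSignal(name, alignment))
        org.alignment = alignment  # latest assessment replaces the previous one

    def post_role(self, name: str, role: str) -> bool:
        """Record a new opening; return True if it comes from a watched organization."""
        org = self.orgs.setdefault(name, OrgSignal(name, 0.0))
        org.open_roles.append(role)
        return org.alignment >= self.threshold

    def fill_role(self, name: str, role: str) -> None:
        """Close a role; the organization itself stays tracked."""
        self.orgs[name].open_roles.remove(role)

    def watchlist(self) -> list:
        """Aligned organizations, regardless of whether any role is open."""
        return sorted(o.name for o in self.orgs.values() if o.alignment >= self.threshold)
```

Because `fill_role` removes only the role, an aligned organization stays on the watchlist after its opening closes, and `post_role` flags its next opening as soon as it appears, which is the early detection described above.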

Outcome 7 — Confidence emerged through content

Confidence was built through creation

Confidence did not appear suddenly. It accumulated gradually through interaction with structured outputs. Creating structured artifacts (trackers, evaluations, narratives, decision logic) made progress visible, and each completed entry reinforced the system's continuity. Confidence grew not because outcomes were always successful, but because reasoning became explainable.

Unexpectedly, parts of the process began to feel engaging rather than exhausting. Structured data collection made progress observable, creating a feedback loop in which effort produced visible results instead of more uncertainty. Work that once created pressure began to create momentum. Confidence did not emerge from external validation. It emerged through content.

Outcome 8 — Decreased productive avoidance

Effort became directional

Before structured reasoning was introduced, effort often accumulated without producing clarity.

Activity felt necessary, but not always purposeful

Applications were submitted quickly

Search cycles repeated frequently

Output volume created the appearance of progress

In hindsight, much of this activity functioned as productive avoidance — sustained motion that avoided confronting deeper uncertainty. Without structure, it was difficult to distinguish between necessary effort and misplaced effort.

Structured decision logic changed this dynamic:

Each action became conditional rather than reflexive

Each decision passed through defined thresholds

Each opportunity either advanced or exited the system based on observable criteria

This reduced the need to act simply to relieve the discomfort of uncertainty. Effort became directional: energy followed verified alignment rather than emotional urgency, and time previously spent repeating uncertain activity shifted toward interpreting structured signals. Over time, the volume of activity decreased while its relevance increased. Effort became more deliberate, and movement more meaningful. The system did not reduce effort. It reduced wasted effort.
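The conditional, threshold-based logic described above can be sketched as a set of named gates that every opportunity must pass to advance. The `Opportunity` fields, the gate names, the 0.6 fit cut-off, and the 35-day staleness limit are all hypothetical; the point of the sketch is that an exit always records which observable criteria failed, so each decision stays explainable.

```python
from dataclasses import dataclass

@dataclass
class Opportunity:
    role: str
    fit_score: float      # hypothetical 0.0-1.0 alignment score
    posting_active: bool  # e.g. posting confirmed as current, not a stale listing
    days_silent: int      # days since the last action with no response

# Hypothetical gates: each is a named, observable check rather than a reflex.
GATES = {
    "fit": lambda o: o.fit_score >= 0.6,
    "current": lambda o: o.posting_active,
    "not_stale": lambda o: o.days_silent <= 35,
}

def decide(opp: Opportunity) -> tuple:
    """Advance only if every gate passes; otherwise exit and record which gates failed."""
    failed = [name for name, check in GATES.items() if not check(opp)]
    return ("advance", []) if not failed else ("exit", failed)
```

Returning the failed gate names alongside the decision is a deliberate choice: it turns "this didn't feel right" into a recorded reason that can be compared across opportunities later.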

System Implications: What the Role Intelligence System Made Possible

The role intelligence system was developed in response to a practical need: to stabilize decision-making within a high-noise environment. But over time, its significance extended beyond managing job search complexity. It demonstrated that structured reasoning can transform unstable environments into navigable systems.

Uncertainty did not disappear. Noise did not vanish. Silence did not stop. What changed was the ability to interpret those conditions without destabilizing behavior. The system did not create certainty. It created continuity. Continuity allowed decisions to accumulate rather than reset. Signals became comparable across time. Patterns emerged where previously there had been ambiguity.

Use of AI in the Role Intelligence System

Artificial intelligence did not function as an answer engine within this process. It functioned as structured scaffolding that enabled repeated evaluation against defined reasoning logic. Through disciplined use, AI became a stabilizing collaborator rather than a shortcut generator. The value of the system was not speed. It was reliability. Reliability created confidence. Not because outcomes were guaranteed, but because reasoning became explainable.

Over time, the system demonstrated a broader principle: Complex environments do not require more effort. They require better structure. When structure exists, uncertainty becomes interpretable. When uncertainty becomes interpretable, behavior stabilizes. When behavior stabilizes, learning becomes cumulative. This progression marked the transition from reactive activity to deliberate operation.

The job search environment became more than a sequence of tasks. It became a living decision system capable of absorbing noise, interpreting silence, and preserving signal across time. That transformation did not occur because artificial intelligence replaced judgment. It occurred because structured collaboration made judgment sustainable.

The Broader Lesson

Using AI effectively is less about mastering a tool and more about strengthening core analytical skills:

precise problem definition

explicit constraints

careful evaluation of outputs

iterative refinement

integration of external input without losing authorship

These are the same skills required to design and operate decision systems in complex environments.

AI makes the reasoning process more visible — but the quality of decisions still depends on how that process is structured.